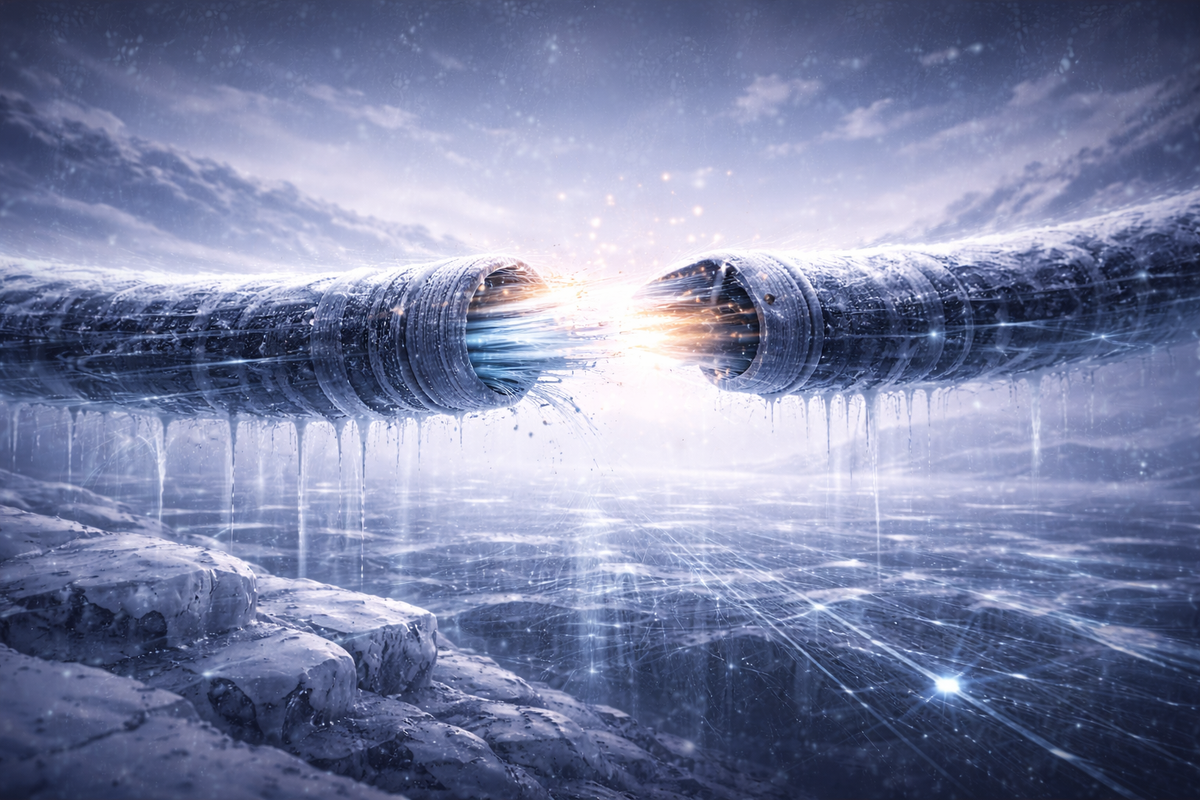

Data sovereignty winter: why governments are freezing the open internet

Governments now frame open data flows as a security threat. Under the banner of sovereignty, rules meant to protect citizens are fragmenting the internet, slowing research, narrowing dissent, and shifting power upward—often without real oversight.

How control over data flows is reshaping who can connect, collaborate, and compete

Around the world, the doors of the global internet are slamming shut, turning what was once a borderless information highway into a series of locked national bunkers. This “data sovereignty winter” is no accident of technology or market forces; rather, it is a calculated power grab, dressed up in the sterile language of “national security” and “compliance.”

From Washington to Brussels to Beijing, leaders who once cheered the free flow of data as the very spirit of innovation now treat it as a weapon to be hoarded, a threat to be contained, and a lever to crush dissent. And the infuriating part? They do it while pretending to protect us, when the real victims are the citizens, innovators, and movements who lose the tools to connect, expose, and resist.

The great data lockdown begins

The open internet’s promise was simple: a place for the free exchange of ideas and knowledge, and for collaboration that gleefully ignored borders. Data zipped across oceans in milliseconds, letting researchers in Europe pool genomic sequences with labs in Asia, journalists in New York swap tips with sources in Nairobi, and activists anywhere build networks that no single regime could surveil or, indeed, silence. Lately, it feels like that era is coming to an end, not with a bang but with a blizzard of regulations that demand data stay home, or get a passport stamped by suspicious bureaucrats.

In the United States, the turning point came with Executive Order 14117 in February 2024, when federal agencies were directed to choke off bulk transfers of Americans’ sensitive personal data (think genomic profiles, precise geolocation histories, financial details, and biometrics) to “countries of concern” such as China, Russia, and Iran. In October 2024, the Justice Department unveiled proposed rules to create a licensing regime that treats such data flows as potential national security time bombs. These measures block adversaries, sure, but they also normalize the idea that any cross-border data movement is inherently suspicious, forcing companies to rethink global operations or face crippling penalties. [Reuters]

The European Union, meanwhile, is waging its own war on openness under the banner of “digital sovereignty.” The EU Cloud Services (EUCS) certification scheme, fiercely debated through 2025, promises to certify trustworthy providers, yet has devolved into a political football. Critics, including the EU Institute for Security Studies, warn that it divides the market along ideological lines, favoring European companies while walling out U.S. giants like Amazon and Microsoft unless they conform to stringent sovereignty demands. What starts as a technical standard quickly becomes a blunt geopolitical weapon: non-compliant services are throttled, data are repatriated, and the open cloud ecosystem is shattered into neat, EU-approved boxes. [EUISS]

Of course, the story would not be complete without China, which, never shy about exerting control and always keeping its cards close to its chest, plays both sides. While issuing guidelines in April 2025 to ease certain financial data outflows for the convenience of business, Beijing also rolled out a set of “safety standards” in September demanding rigorous assessments for any cross-border personal information processing. The result is binary: selective openness for multinationals that agree to play by the rules, and an iron-fisted lockdown for everything else. This regulated hypocrisy, facilitating industrial flows with one hand while strangling citizen data with the other, reveals the true nature of the game: sovereignty isn’t about protection, but about who gets control of the data and can wield it as a tool of state power. [Reuters]

Read: Who writes the code rules the world

Localization: slow strangulation

Data localization is perhaps the sharpest blade of this winter. Governments mandate that key information, such as health records, financial transactions, and even the device telemetry that pinpoints your location, must live on servers within national borders. The pitch to citizens is one of protection: keep the people’s data inside, under “your” laws, safe from foreign spies outside. The reality, of course, is the pursuit of domestic control: if the servers are local, so is the backdoor. Police warrants become server-room raids, and intelligence agencies get bulk access to data without the pesky hassle of international subpoenas.

Let’s take the U.S. perspective again. EO 14117 doesn’t politely ask for localization; it skips that step by prohibiting “covered data transactions” that could expose bulk sensitive data to possible adversaries. Companies respond by scrambling to segregate American data, building redundant infrastructure, or abandoning their global services altogether. This may be a purely commercial reaction, but it carries a human cost: a biotech startup sharing genomic datasets for cancer research, for example, suddenly faces draconian export controls, as if seeking to cure disease were first and foremost a “security risk.” Such heavy-handed measures also thin out competition, since small developers ditch cross-border collaboration when faced with nightmarish compliance paperwork that only Big Tech can afford to manage.

Read related: World 2.0: inside the architecture of new trade blocs

Europe’s localization push is subtler but no less vicious. The EUCS scheme isn’t just about encryption or audits; it is peppered with political criteria that let regulators, once again in the name of internal protection against external threat, veto providers based on ownership or foreign ties. An August 2024 EU-China pact on industrial data flows illustrates the double standard: grease the wheels for corporate data when it suits economic goals, but hoard personal information like state secrets. [Reuters] The result is a spiral of insularity, in which ordinary Europeans wake up to a narrower internet, one where perfectly innovative tools from abroad are blocked not for being unsafe in practice, but for being insufficiently European.

China’s model, as the blueprint for authoritarian localization, is both eminently expected and, in contrast to our prior examples, more straightforward in its application. The 2025 financial guidelines permit outbound flows only after “security assessments,” effectively turning every transfer into a test of national loyalty. Personal data? Forget it. Strict standards ensure it stays within the Great Firewall. And while this is touted as defense, the nature of that firewall makes plain that it is simply domination, ensuring the government’s grip on information that could fuel internal dissent or encourage economic independence.

For the state-capacity backdrop (how governance becomes system design), read: China’s next five-year plan: AI moves from slogan to system

A blank check for overreach

“National security” is the magic spell, the deftly uttered incantation that justifies it all, stretched to cover anything in our digital lives. Genomic data could train adversarial AI? Block it. Geolocation histories reveal troop movements? Localize them. Financial ledgers reveal elite corruption? Assess them first, then root out according to allegiance. The U.S. rules exemplify this inexorable creep: what begins as targeting adversaries such as China and Russia morphs into an operational framework where any bulk data export needs Washington’s blessing.

Orwell indeed.

EU debates tell the same story. Supporters of the EU Cloud Services Scheme frame it as a resilience measure, but think-tank research shows the underlying politics: cloud certification has become a proxy battle over fears of U.S. dominance, with France and Germany backing national champions while smaller member states fear exclusion. Academic research on EU data sovereignty captures this contradiction with some clarity, finding that efforts to assert “autonomy” risk fragmenting EU interdependence and undermining the very research collaboration and open trade that have underpinned Europe’s post-war prosperity. [Cogitatio]

The anger deepens once the double standards are clear. Governments that once tapped foreign data flows without hesitation now protest calls for reciprocal access; they demand backdoors into private-sector apps in the name of “safety,” then ban those same tools when encryption actually protects users. Legal mechanisms for robust oversight are thin to the point of being laughable: no independent audits, no sunset clauses, just emergency powers made permanent. Citizens are asked to put blind trust in states that have already lied about mass surveillance.

The open internet under pressure

Restrictions on cross-border data flows don’t just reshape markets; they narrow the conditions under which openness functions at all, and open-source projects feel this first. A developer in Berlin working with collaborators in Bangalore on privacy tools now has to navigate both EU cloud certification rules and U.S. transfer bans, while investigative journalists pooling information across borders run into localization requirements that treat such information as a kind of regulated cargo. Even at the level of citizen politics, diaspora groups organizing on global platforms find their digital movement restricted and throttled as national telecoms are pressed to enforce “sovereign” priorities.

Scientific collaboration is affected in similar ways. During COVID, open data sharing demonstrated how fast digitally enabled scientific collaboration can be, rapidly tracking the emergence of viral variants and feeding that data into real-time vaccine development. That experience of relatively borderless scientific urgency now sits uneasily alongside new genomic export restrictions and data-residency mandates. Researchers respond rationally, siloing datasets to avoid compliance risk, and the result is slower progress, weaker replication, and less incentive to collaborate across borders in the first place. Transnational NGOs documenting human rights abuses face comparable friction, since shared mapping tools and archives that once multiplied the force of their collaboration become legal liabilities in the eyes of national policy.

A creeping sense of unwelcome change has entered our lives, eroding the everyday forms of digital openness we came to expect or, admittedly, simply took for granted. Social networks struggle in an environment built around jurisdictional silos, privacy tools designed to route around censorship are reclassified as security threats, and the systems that survive are those with the scale and legal resources to absorb compliance costs or negotiate exemptions. Large cloud providers rebrand themselves as “sovereign,” while state-aligned platforms consolidate their position. Openness hasn’t disappeared entirely, but it is now playing with a disadvantaged hand.

Power, not protection

Governments increasingly treat open data flows not as a public good, but as a risk to political control. The irony is hard to miss, since the modern digital economy was built on cross-border data movement, yet once citizens, journalists, and rivals began using that openness to scrutinize power, the rules shifted. Measures sold as “safety” or “resilience” now function to narrow who can share information, collaborate across borders, or simply organize effectively. Oversight certainly has not kept pace, and powers granted under the expedient, often-fabricated banner of “emergency” routinely outlive the “crises” that justified them, with weak checks and expiry clauses that exist more on paper than in practice.

The consequences of all this are uneven yet concrete. Refugees find their personal data trapped in the jurisdictions they fled, often beyond their control or protection, while marginalized groups lose access to transnational networks that once offered visibility, coordination, and support. Startups and researchers in lower-income countries face rising compliance barriers, while large firms with legal teams and political access absorb the costs with ease. As regulatory measures benefit the larger, more influential companies, the creativity of the smaller, newer players is stifled.

The open web still exists, sure, but it is under strain. Preserving it requires treating user control and interoperability as core policy objectives, not optional extras for the dominant platforms to market as key features. This means real oversight, clear limits on emergency powers, and standards that protect your rights without splitting the internet into separate systems. The alternative is a slower, narrower internet shaped by its jurisdictional walls rather than by well-considered, shared rules.

So as we approach a new year, the freeze is perhaps already tangible in delayed data flows, blocked services, and growing barriers between builders and users. If the reactive need for control continues to be presented as an issue of security, the temporary constraints imposed will harden into permanent features, and we will see the space for openness shrink accordingly.

Read this. Notice that. Do something.

Read this: Reuters reporting on U.S. Executive Order 14117 and the creation of a licensing regime for bulk data transfers; EU Institute for Security Studies analysis of the EU Cloud Services Scheme and its market-splitting effects; and Cogitatio research on EU data sovereignty and the risks it poses to research collaboration and trade.

Notice that: Across very different political systems, the pattern is the same: “security” rules expand, oversight lags, and temporary measures quietly become permanent. Openness narrows not through a single ban, but through accumulated friction.

Do something: Treat cross-border data rules as power questions, not technical fixes. Ask who gains control, who bears the cost, and which forms of openness disappear first when compliance becomes the priority. Critical thinking!
